Mar 24, 2026

ActionStreamer

What WebRTC Actually Means for Field Operations

When a HAZMAT technician is 200 feet into a structure and the incident commander needs eyes on what they're seeing, "low latency" stops being a technical specification. It becomes the difference between a good call and a bad one.

Most video tools were designed for conference rooms. They were built assuming stable Wi-Fi, stationary cameras, and users who can wait two seconds for a stream to catch up. Field operations don't offer any of those conditions. What they need is something built differently, and WebRTC, when it's implemented correctly, is the closest the industry has gotten.

Here's what it actually means for the people running operations on the ground.

WebRTC in plain language

WebRTC is not an app or a product. It's an open standard: a set of protocols and APIs built into modern browsers and devices that governs how audio, video, and data travel between endpoints in real time.

You've used it without knowing it. Google Meet runs on it. So does WhatsApp video. The reason it became the standard for real-time communication is that it was designed from the start for low latency, works without proprietary plugins, and encrypts media in transit by default.

What WebRTC is not is a broadcast delivery protocol. RTMP and HLS, the protocols behind most live streaming, were designed to push video from a source to a large audience with acceptable delay. They're one-way pipes. WebRTC is a two-way channel, which changes entirely what you can do with it.

The only metric that matters in the field

There are two meaningfully different categories of video latency, and the difference between them is operational, not just technical.

"Low latency" in broadcast terms means two to four seconds of delay. For a sports stream or a news feed, that's fine. Nobody is making a split-second decision based on what they see.

"Real-time" means under 500 milliseconds, the threshold at which the human brain stops perceiving a gap between an action and its representation on screen. At that latency floor, a remote expert can direct a technician's hands through a repair. An incident commander can call a tactical decision based on what a team member is actually seeing, right now. A remote operator can guide someone through a procedure without the conversation feeling like a satellite phone call.

WebRTC is architecturally capable of hitting that threshold. Protocols built for broadcast delivery generally aren't, regardless of how much bandwidth you throw at them. The delay isn't a tuning problem. It's baked into how the protocols buffer and deliver data.
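A rough back-of-the-envelope model shows why that delay is structural. The sketch below assumes a segment-based player that waits for a fixed number of whole segments before it starts playback. The function name and the segment settings are illustrative assumptions, not measurements of any particular service.

```python
def segmented_latency_floor(segment_seconds: float, buffered_segments: int) -> float:
    """Minimum delay added by segment buffering alone.

    Ignores capture, encode, and network time, so real delay is higher.
    """
    return segment_seconds * buffered_segments


# Assumed settings: 1-second segments, player holds 3 before it starts playing.
print(segmented_latency_floor(1.0, 3))  # 3.0
# Assumed settings: 2-second segments, same 3-segment buffer.
print(segmented_latency_floor(2.0, 3))  # 6.0
```

Bandwidth does not appear anywhere in the calculation. A faster link delivers each segment sooner, but the player still waits for whole segments to exist.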

Where standard WebRTC breaks down

Here's where most vendor conversations get dishonest. WebRTC was designed for peer-to-peer browser calls between two people on reliable internet connections. Drop it into a field environment without significant engineering layered on top, and it fails in predictable ways.

Intermittent connectivity is the first problem. LTE drops, mesh network gaps, and dead zones inside structures are hard on a naive WebRTC implementation. Streams freeze, jitter buffers run dry, and connections drop entirely instead of degrading smoothly.

Scale is the second. Peer-to-peer WebRTC works fine for two people. Add a third or fourth stream and the model starts to break, because in a peer-to-peer mesh every device has to send a separate copy of its video to each peer that watches it. An incident commander monitoring six team members simultaneously on a direct peer-to-peer architecture is asking for something WebRTC's original design wasn't built to handle.
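The arithmetic behind that scaling problem is simple. The sketch below assumes a full mesh, where every device connects directly to every other device. The function names are hypothetical and exist only for illustration.

```python
def mesh_links(participants: int) -> int:
    """Total peer connections in a full mesh: one per pair of devices."""
    return participants * (participants - 1) // 2


def mesh_uploads_per_device(participants: int) -> int:
    """Copies of its own video each device must upload, one per peer."""
    return participants - 1


# 7 = one incident commander plus six team members.
for n in (2, 4, 7):
    print(n, mesh_links(n), mesh_uploads_per_device(n))
# 2 1 1
# 4 6 3
# 7 21 6
```

On a body-worn device with a constrained LTE uplink, six simultaneous uploads of the same video is the part that hurts.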

High-movement wearables add a third layer of complexity. The compression and encoding assumptions that work for a webcam on a desk fall apart when the camera is mounted on a helmet moving through a structure.

None of this means WebRTC is the wrong answer. It means raw WebRTC isn't the answer. What's built on top of it is.

What a field-ready WebRTC implementation actually requires

The difference between WebRTC that works in a conference room and WebRTC that works in a denied or degraded environment comes down to a few architectural decisions.

The first is moving away from peer-to-peer toward a media server model, specifically a Selective Forwarding Unit (SFU). An SFU sits in the middle of the stream, routing video efficiently to multiple recipients without requiring each device to maintain direct connections to every other device. This is what makes multi-party field video actually scalable.
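As a toy illustration of the forwarding idea, the in-memory model below has each camera upload a packet once and lets the server fan it out to whoever is subscribed. `ToySfu` and the device names are invented for this sketch. A real SFU handles RTP, encryption, and congestion feedback, none of which is modeled here.

```python
from collections import defaultdict


class ToySfu:
    """In-memory stand-in for an SFU: one upload per publisher, fan-out to viewers."""

    def __init__(self):
        self.subscribers = defaultdict(set)  # publisher id -> set of viewer ids
        self.delivered = []                  # (publisher, viewer, packet) log

    def subscribe(self, viewer, publisher):
        self.subscribers[publisher].add(viewer)

    def publish(self, publisher, packet):
        """Forward one uploaded packet, unmodified, to each viewer; return copy count."""
        viewers = sorted(self.subscribers[publisher])
        for viewer in viewers:
            self.delivered.append((publisher, viewer, packet))
        return len(viewers)


sfu = ToySfu()
for viewer in ("commander", "toc", "safety-officer"):
    sfu.subscribe(viewer, "helmet-cam-1")

# The helmet camera uploads once; the server makes the three copies.
print(sfu.publish("helmet-cam-1", b"frame-0001"))  # 3
```

The wearable's uplink cost stays constant however many people are watching, which is the property that matters on a constrained field network.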

The second is adaptive bitrate handling. When bandwidth drops, a well-implemented system degrades the stream quality rather than killing the stream. Lower resolution is operationally useful. A frozen or dropped feed is not.
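The selection logic at the heart of adaptive bitrate can be sketched in a few lines. The quality ladder, the bitrates, and the 80% headroom factor below are all assumptions chosen for illustration. They do not describe how any specific product, ActionSync Connect included, is tuned.

```python
# Hypothetical quality ladder: (label, video bitrate in kbit/s), highest first.
LADDER = [("1080p", 4000), ("720p", 2000), ("480p", 900), ("240p", 300)]


def pick_rung(estimated_kbps, headroom=0.8):
    """Return the best rung that fits within a safety fraction of the estimate."""
    budget = estimated_kbps * headroom
    for label, kbps in LADDER:
        if kbps <= budget:
            return label, kbps
    # Nothing fits: fall back to the lowest rung instead of stopping the stream.
    return LADDER[-1]


for estimate in (6000, 1500, 200):
    print(estimate, pick_rung(estimate))
# 6000 ('1080p', 4000)
# 1500 ('480p', 900)
# 200 ('240p', 300)
```

The last case is the important one: when even the lowest rung does not fit the estimate, the sketch still returns a rung rather than giving up on the stream.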

The third is store-and-forward capability. In genuinely disconnected environments, real-time streaming isn't always possible. The system needs to buffer locally and sync when connectivity returns, without losing the data that was captured during the gap.
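A minimal sketch of the store-and-forward pattern follows, assuming captured video is cut into discrete segments and that an upload succeeds whenever the link is marked online. The `StoreAndForward` class and the segment names are hypothetical. A real device would also persist the backlog to disk and retry failed uploads.

```python
from collections import deque


class StoreAndForward:
    """Send segments immediately when online; otherwise queue and flush in order."""

    def __init__(self, send):
        self.send = send        # callable that uploads one segment (assumed to succeed)
        self.online = True
        self.backlog = deque()  # segments captured while disconnected

    def capture(self, segment):
        if self.online:
            self.send(segment)
        else:
            self.backlog.append(segment)

    def set_online(self, online):
        self.online = online
        # On reconnect, drain oldest first so capture order is preserved.
        while self.online and self.backlog:
            self.send(self.backlog.popleft())


uploaded = []
link = StoreAndForward(uploaded.append)
link.capture("seg-1")
link.set_online(False)   # dead zone inside the structure
link.capture("seg-2")
link.capture("seg-3")
link.set_online(True)    # connectivity returns, backlog flushes
link.capture("seg-4")
print(uploaded)  # ['seg-1', 'seg-2', 'seg-3', 'seg-4']
```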

The fourth, and the one most vendors miss, is sensor data alongside video. GPS position, environmental telemetry, atmospheric readings: these belong in the same stream as the video feed, not in a separate system that someone has to mentally reconcile with what they're watching.
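Carrying sensor data with the video means, in practice, that readings and frames share one clock, so software rather than a person lines them up. The sketch below assumes millisecond timestamps from a shared clock and a non-empty, time-sorted list of samples. The field name `o2_pct` and the values are invented for illustration.

```python
from bisect import bisect_left


def nearest_sample(samples, frame_ts_ms):
    """Return the (timestamp_ms, reading) pair closest in time to a video frame.

    samples must be non-empty and sorted by timestamp.
    """
    times = [ts for ts, _ in samples]
    i = bisect_left(times, frame_ts_ms)
    # Only the neighbours on either side of the insertion point can be closest.
    candidates = samples[max(i - 1, 0): i + 1]
    return min(candidates, key=lambda s: abs(s[0] - frame_ts_ms))


telemetry = [
    (0, {"o2_pct": 20.9}),
    (1000, {"o2_pct": 20.1}),
    (2000, {"o2_pct": 19.4}),
]
print(nearest_sample(telemetry, 1400))  # (1000, {'o2_pct': 20.1})
```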

ActionSync Connect: WebRTC built for the frontline

ActionStreamer built ActionSync Connect specifically to close the gap between what WebRTC promises and what field environments actually demand.

ActionSync Connect is a wearable live streaming and video conferencing platform designed for remote assist workflows. Where consumer-grade video tools assume a stable connection and a stationary camera, ActionSync Connect is built around the opposite: body-worn devices moving through complex environments, networks that drop without warning, and teams that need to stay coordinated across multiple simultaneous streams.

On the streaming side, ActionSync Connect handles the SFU architecture, adaptive bitrate management, and store-and-forward fallback that raw WebRTC doesn't provide out of the box. On the conferencing side, it enables the remote assist model that field operations actually need: a technician wearing a camera, a remote expert watching that feed in real time, and two-way communication that lets the expert guide the work as it's happening.

The platform runs across the wearable hardware ActionStreamer supports, including helmet-mounted and body-worn cameras designed for industrial and public safety environments. It sits on top of ActionStreamer's broader ActionSync media layer, which means video, audio, and sensor data travel together in a single pipeline rather than being stitched together after the fact.

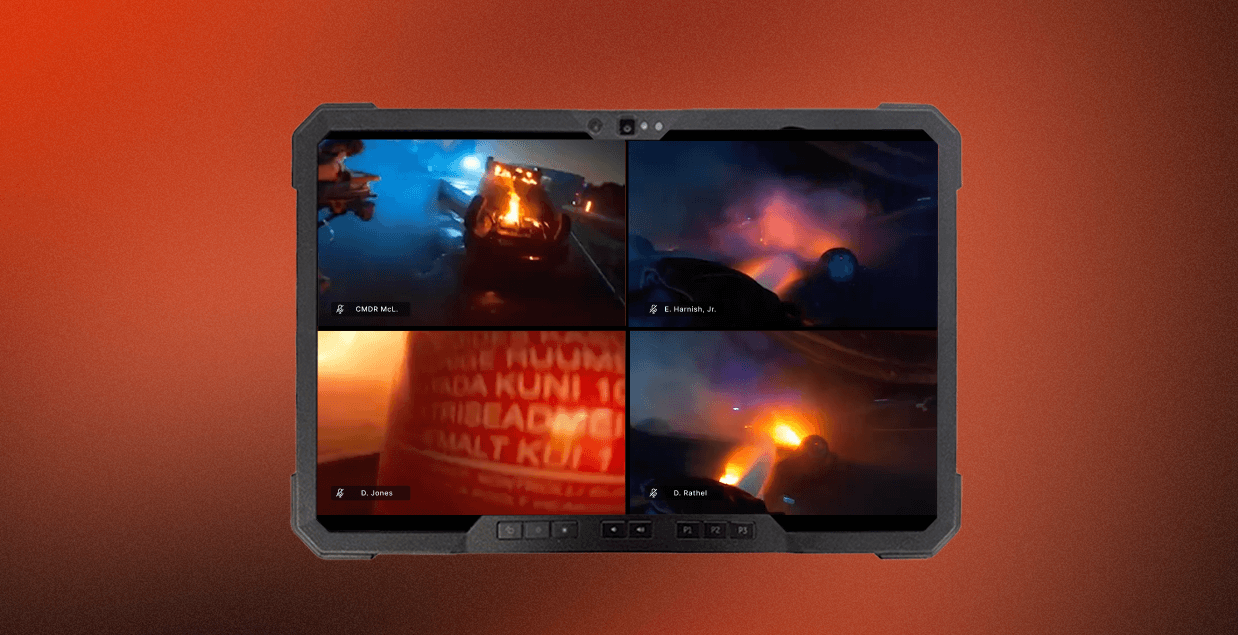

For incident commanders, maintenance supervisors, and tactical operations centers, that means one interface showing what each team member sees, with the latency profile that makes the feed operationally useful rather than just visually interesting.

Where this plays out in practice

In HAZMAT response, an incident commander watching a technician move through a contaminated space needs to see what the technician sees, with enough fidelity and low enough latency to redirect them before they make contact with a hazard. A two-second delay makes that functionally impossible.

In maintenance, repair, and overhaul operations, a remote expert watching a technician work on an aircraft component can only guide a torque sequence or a procedure in real time if the video is actually real time. HLS-based streaming at four seconds of delay turns that into a guessing game.

In tactical operations, body-camera video streaming back to a tactical operations center (TOC) is only useful if the TOC is seeing what's happening now, not a replay of what happened four seconds ago.

Questions worth asking any vendor

If you're evaluating a platform that claims WebRTC capability, the questions that matter are:

What latency do you actually deliver under degraded network conditions, not ideal lab conditions?

Do you use a peer-to-peer model or a media server architecture?

When connectivity drops, does the stream degrade gracefully or fail hard?

Can you stream sensor data alongside video in the same pipeline?

How many simultaneous streams can an incident commander or TOC monitor without the system degrading?

The answers will tell you whether the implementation was designed for field use or adapted from something that wasn't.

The bottom line

WebRTC is the right foundation for field video. The sub-second latency, the encryption, the absence of proprietary dependencies: those properties matter in operational environments in ways they don't in a sales demo.

But the foundation is only as good as what's built on it. The field environment is not a conference room, and the gap between a WebRTC implementation that knows that and one that doesn't shows up exactly when it's most expensive: when someone is making a call based on what they're seeing, and what they're seeing is four seconds old.